Search in VR

UX Exploration

Today, searching in VR is a challenging task. This exploration aims to test out new UX concepts to improve text input for search.

The Problem

Text input is important in a number of scenarios such as searching for items, taking notes, etc. When we start up our VR experience, one of our first tasks is to find an app to launch either by browsing or searching. I was inspired to start this project when I noticed how much I struggled to search for apps in VR, and how unnatural it felt to input text. Is there a way to input text for a search that feels more natural and comfortable? That's the problem I'm trying to solve.

How does text input work in VR today? Most VR apps provide a giant digital QWERTY keyboard, which makes sense because it is similar to how we input text on desktop and mobile. Users select characters in one of three ways:

- Moving a laser pointer with their wrist/arm

- Pushing an analog stick to select (similar to the console solution)

- Hovering over a character and clicking a touchpad to select (e.g. SteamVR keyboard)

All three of these solutions work, so why do they feel awkward? A "natural" input solution doesn't require us to "think" about how to communicate the text. Handwriting feels "natural" because we have practiced it since we were children, so writing carries little cognitive load. Typing on a physical keyboard is something many people have adapted to, and the keyboard is well designed for fast and accurate input, so that feels pretty good too.

The SteamVR keyboard is an example of a VR keyboard that feels unnatural. There are two modes of input: one with a laser pointer, and the other via the touchpad. Using a laser pointer is challenging because it requires much more arm and eye movement than using a mouse. People are also not used to making fine-grained movements with a controller, so it takes time to select specific characters.

As for the dual touchpad input method, it's awkward because it is a new input method for many of us and it's a bit confusing. The keyboard is split in half, with the left side controlled by the left touchpad and the right side by the right touchpad. We don't frequently interact with a split keyboard, and it's not clear why each touchpad can only interact with its own set of keys. Yes, the iOS keyboard can be split, but that is not the default state, and the physical separation of the keyboard makes more sense there because your hands are physically separated in the same way.

I can imagine users acclimating to the SteamVR keyboard to a degree at some point. Is there a more natural form of input for text though? For this exercise, I scoped the problem to text input for search queries.

The Idea

What if users could write their search? We've been writing since we were kids, so we are very familiar with this form of input, which makes it easier to pick up. Handwriting certainly won't win any speed competition, but with smart autocomplete, search queries don't have to be very long.
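To make the autocomplete point concrete, here is a minimal sketch in Python of prefix completion over a catalog. It is not taken from the prototype (which is a Unity project); the `autocomplete` function, the `CATALOG` items, and the `limit` parameter are all made up for illustration.

```python
from bisect import bisect_left

# Hypothetical catalog items, invented for this example.
CATALOG = ["Teapot", "Tent", "Television", "Table lamp", "Sofa"]


def autocomplete(prefix, catalog, limit=5):
    """Return up to `limit` catalog titles starting with `prefix` (case-insensitive)."""
    # Sort by lowercase title so that all matches for a prefix sit next to each other.
    # A real app would build this index once instead of on every call.
    entries = sorted((title.lower(), title) for title in catalog)
    keys = [key for key, _ in entries]
    wanted = prefix.lower()

    # Binary search for the first key that is >= the prefix.
    start = bisect_left(keys, wanted)

    matches = []
    for key, title in entries[start:]:
        # Stop at the first non-matching key or once we have enough suggestions.
        if not key.startswith(wanted) or len(matches) >= limit:
            break
        matches.append(title)
    return matches


if __name__ == "__main__":
    print(autocomplete("te", CATALOG))
```

With this toy catalog, writing just "te" is enough to narrow the results to the three items that start with those letters, which is why a handwritten query can stay short.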

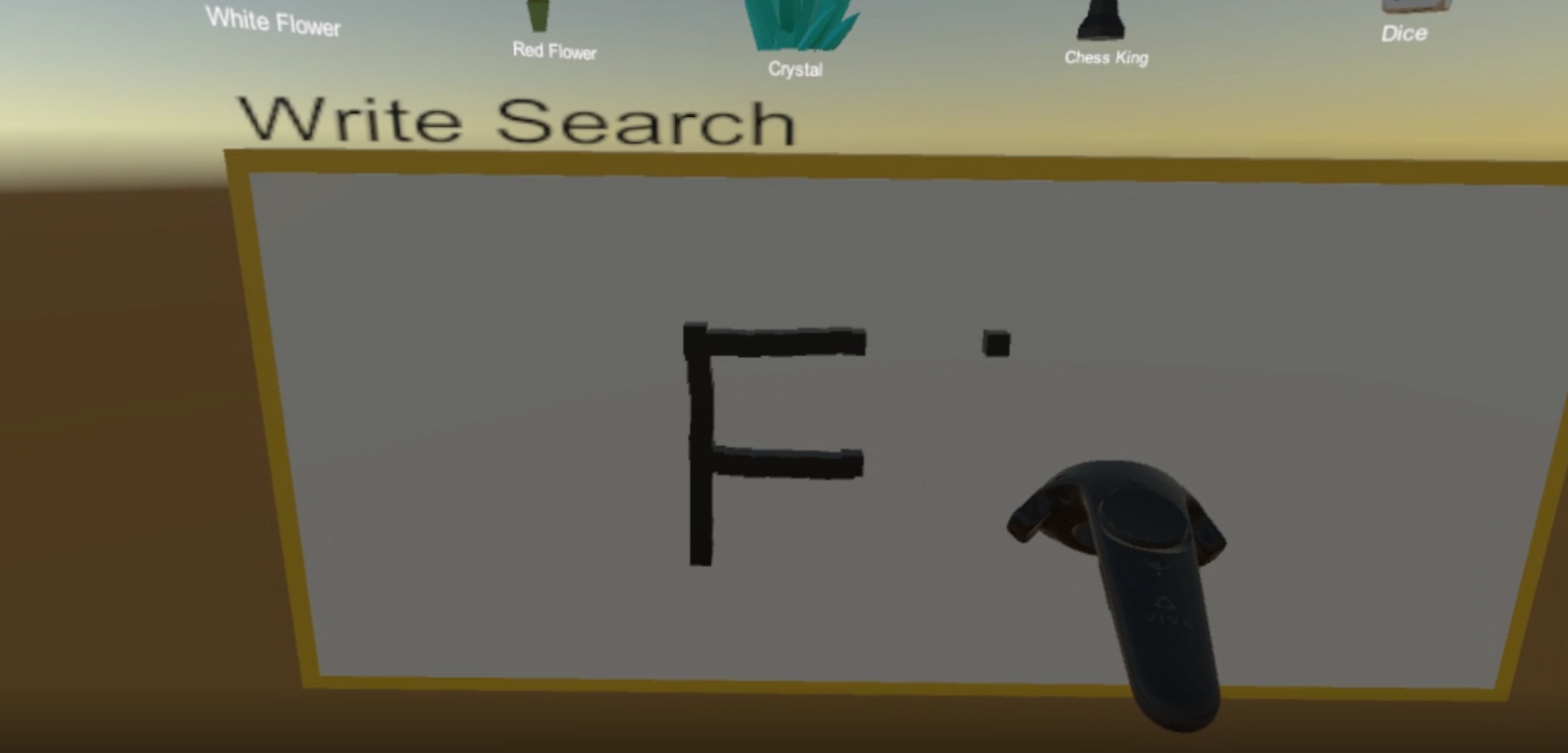

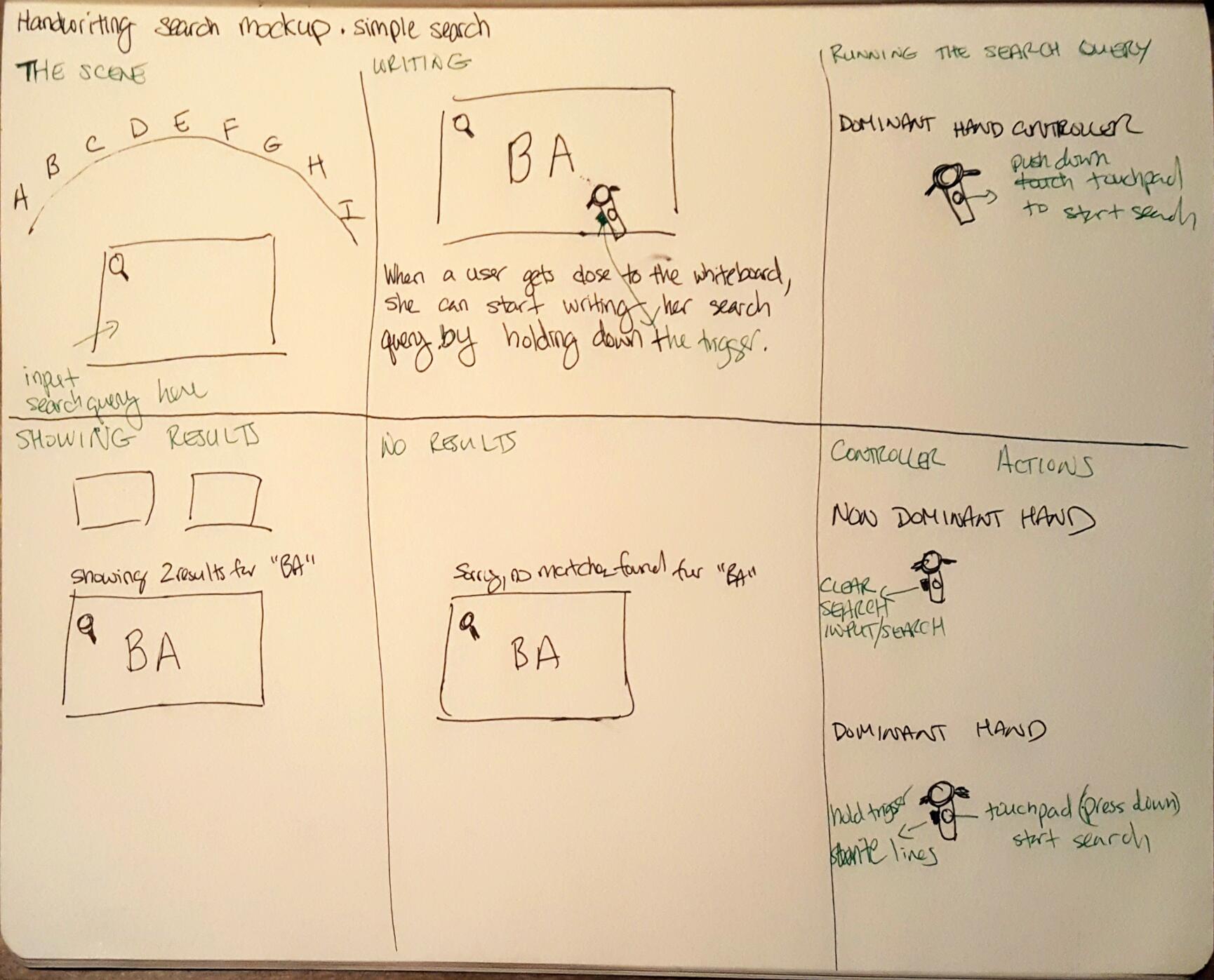

To test this idea I built a basic prototype with Unity. The scene is set up so that you can search through a store catalog. You write letters on a whiteboard, and I run OCR on the writing, use the recognized text as the search query, and return results. I had a few users test this prototype, and they agreed that this form of input felt more "natural" than the digital keyboards they have experienced in VR so far. However, there were times when the OCR did not recognize the writing correctly, and rewriting the query can be frustrating.
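One possible way to soften OCR misreads is to match the recognized text against catalog titles approximately rather than exactly. The sketch below does that in Python with `difflib.get_close_matches` from the standard library. It is not taken from the prototype; the `match_query` function, the catalog items, and the `cutoff` value are assumptions chosen for illustration.

```python
import difflib

# Hypothetical catalog items, invented for this example.
CATALOG = ["Teapot", "Tent", "Television", "Table lamp", "Sofa"]


def match_query(ocr_text, catalog, limit=3, cutoff=0.6):
    """Return catalog titles that are close to the (possibly misread) OCR text."""
    # Compare in lowercase, but keep a map back to the original titles.
    by_lower = {title.lower(): title for title in catalog}
    query = ocr_text.strip().lower()

    # get_close_matches ranks candidates by similarity and drops
    # anything scoring below `cutoff` (a value between 0 and 1).
    close = difflib.get_close_matches(query, list(by_lower), n=limit, cutoff=cutoff)
    return [by_lower[key] for key in close]


if __name__ == "__main__":
    # "0" stands in for an "o" that the OCR misread.
    print(match_query("teap0t", CATALOG))
```

The trade-off is that a lower `cutoff` forgives more recognition errors but also returns more unrelated items, so the value would need tuning against real handwriting samples.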

If you would like to try the prototype, you can find the code here. It is built with Unity 5.5 and intended for the HTC Vive. Please note this is a prototype! A few notes about the build: (1) A "flash" happens after you hit search because I switch to another camera to take a screenshot of the whiteboard before switching back to the headset. (2) The position of the whiteboard and catalog does not update based on the user's height. (3) The "writing" is implemented with cubes to simplify the work, but this hurts the OCR results.

Final Thoughts

Using handwriting for search input is easier for users to pick up because they don't need to learn a new mechanism. The biggest area of concern is the accuracy of the OCR results: it is frustrating when the OCR misinterprets your input. Of course, keyboard users also make mistakes by typing the wrong character. I suspect mistakes due to OCR will be more frequent than typing mistakes, so the question is whether the more natural feel of handwriting outweighs the decrease in accuracy. It's hard to say without more data, but my intuition says that is probably not the case. In the future, this may change.
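Gathering that data would need a common yardstick for both input methods. One candidate is character error rate: the edit distance between what the user meant and what the system received, divided by the length of the intended text. The Python sketch below is only an illustration of that metric; the function names are made up, and no measurements from the prototype are implied.

```python
def edit_distance(a, b):
    """Levenshtein distance: minimum insertions, deletions, and substitutions to turn a into b."""
    # prev[j] holds the distance between the processed part of `a` and b[:j].
    prev = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        curr = [i]  # turning a[:i] into an empty string takes i deletions
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            curr.append(min(
                prev[j] + 1,         # delete char_a
                curr[j - 1] + 1,     # insert char_b
                prev[j - 1] + cost,  # substitute, or keep if equal
            ))
        prev = curr
    return prev[-1]


def character_error_rate(intended, received):
    """Edit distance normalized by the length of the intended text."""
    if not intended:
        raise ValueError("intended text must not be empty")
    return edit_distance(intended, received) / len(intended)


if __name__ == "__main__":
    # One misread character out of six.
    print(character_error_rate("teapot", "teap0t"))
```

Logging this value for the same set of queries entered by handwriting and by keyboard would give a direct accuracy comparison between the two.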

For next steps, I plan on exploring other UX concepts such as voice input or other types of keyboards. There is a lot more work to be done here!